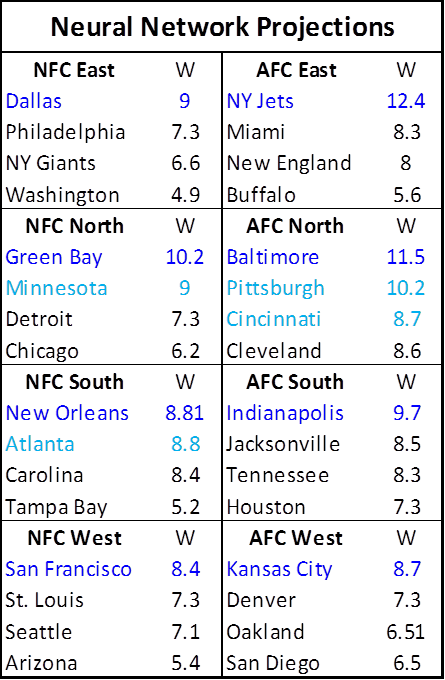

Before the start of the 2010 NFL season, I used a very simple neural network to create this set of last-second projections:

Clearly, some of the predictions worked out better than others. For example, Kansas City did manage to win their division (which I never would have guessed), but Dallas and San Francisco continued to make mockeries of their past selves. We did come dangerously close to a Jets/Packers Super Bowl, but in the end, SkyNet turned out to be more John Edward than Nostradamus.

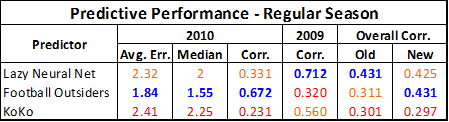

From a prediction-tracking standpoint, the real story of this season was the stunning about-face performance of Football Outsiders, who dominated the regular season basically from start to finish:

Note: Average and Median Errors reflect the difference between projected and actual wins. Correlations are between projected and actual win percentages.
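For anyone who wants to score their own projections the same way, here is a minimal sketch of the three metrics described in the note. It assumes the errors are absolute differences in wins and that the correlation is a plain Pearson correlation on win percentages over a 16-game season. The function names and the four-team sample are made up for illustration and are not the data behind the table.

```python
from math import sqrt
from statistics import mean, median

GAMES = 16  # regular-season games per team in 2010


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def score_projections(projected_wins, actual_wins):
    """Average error, median error (in wins) and correlation (on win pct).

    Assumption: "error" means the absolute difference between projected
    and actual wins for each team.
    """
    errors = [abs(p - a) for p, a in zip(projected_wins, actual_wins)]
    projected_pct = [p / GAMES for p in projected_wins]
    actual_pct = [a / GAMES for a in actual_wins]
    return {
        "avg_error": mean(errors),
        "median_error": median(errors),
        "correlation": pearson(projected_pct, actual_pct),
    }


# Hypothetical four-team sample, purely illustrative.
projected = [10.0, 8.5, 7.0, 6.5]
actual = [11, 8, 4, 9]
result = score_projections(projected, actual)
```

Because win percentage is just wins divided by 16, the correlation comes out the same whether it is computed on wins or on percentages. The conversion is kept only to mirror the wording of the note.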

Not only did they almost completely flip the results from 2009, but their stellar 2010 results (combined with the below-average outing of my model) actually pushed their last 3 seasons slightly ahead of the neural network overall. This improvement also puts Koko (.25 * previous season’s wins + 6) far in FO’s rearview, providing further evidence that Koko’s 2009 success was a blip.
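Koko's formula is simple enough to write out in a couple of lines. The function name below is my own label for this sketch. The arithmetic is exactly the rule quoted above.

```python
def koko_projection(previous_wins):
    """Koko's projection: a quarter of last season's wins, plus six."""
    return 0.25 * previous_wins + 6


# An 8-8 team projects to 8 wins; a 16-0 team projects to only 10.
average_team = koko_projection(8)
perfect_team = koko_projection(16)
winless_team = koko_projection(0)
```

In other words, Koko drags every team three-quarters of the way back toward 8-8, so its projections for a 16-game season can never fall outside the 6 to 10 win range.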

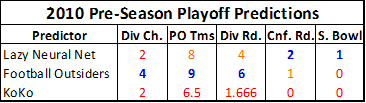

If we use each method’s win projections to project the post-season as well, however, things turn out a bit differently. Football Outsiders starts out in a strong position, having correctly picked 4 of 8 division champions and 9 of 12 playoff teams overall (against 2 and 8 for the NN respectively), but their performance worsens as the playoffs unfold:

The neural network correctly placed Green Bay in the Super Bowl and the Jets into the AFC championship game, while FO’s final 4 were Atlanta over Green Bay and Baltimore over Indianapolis.

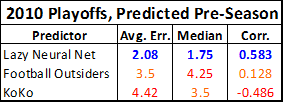

Moreover, if we use these preseason projections to pick the overall results of the playoffs as they were actually set, the neural network outperforms its rivals by a wide margin:

Note: The error measured in this table is between predicted finish and actual finish. The Super Bowl winner finishes in 1st place, the loser in 2nd place, conference championship losers each tie for 3.5th place (average of 3rd and 4th), divisional losers tie for 6.5th (average of 5th, 6th, 7th, and 8th), and wild card round losers tie for 10.5th (average of 9th, 10th, 11th, and 12th).
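The tied finishing places in the note can be reproduced with a short sketch. It assumes the reported error is the average absolute difference between a team's predicted and actual finish, which the note does not spell out. The round labels, function names, and the three-team example are hypothetical.

```python
def tied_place(first, last):
    """Shared finish for teams tied across places first..last (inclusive)."""
    return (first + last) / 2


# Finishing place earned by exiting the playoffs at each stage.
FINISH = {
    "champion": 1.0,
    "super_bowl_loser": 2.0,
    "conference_loser": tied_place(3, 4),   # 3.5
    "divisional_loser": tied_place(5, 8),   # 6.5
    "wild_card_loser": tied_place(9, 12),   # 10.5
}


def finish_error(predicted, actual):
    """Average absolute gap between predicted and actual finishing place.

    Assumption: both dicts map the same team names to a key of FINISH.
    """
    gaps = [abs(FINISH[predicted[team]] - FINISH[actual[team]]) for team in actual]
    return sum(gaps) / len(gaps)


# Hypothetical three-team bracket, purely illustrative.
predicted = {"A": "champion", "B": "conference_loser", "C": "wild_card_loser"}
actual = {"A": "super_bowl_loser", "B": "divisional_loser", "C": "wild_card_loser"}
example_error = finish_error(predicted, actual)
```

Averaging the tied places keeps the scale honest: the twelve finishes still sum to 78, the same as the places 1 through 12 would with no ties.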

This minor victory will give me some satisfaction when I retool the model for next season. After all, this model is still essentially based on a small fraction of the variables used by its competitor, and neural networks generally get better and better with more data. On balance, though, the season clearly goes to Football Outsiders. So credit where it’s due, and congratulations to humankind for putting the computers in their place, at least for one more year.