Last season I did some analysis of rookie starting quarterbacks and which of their stats are most predictive of future NFL success. One of the most fun and interesting results I found is that rookie interception % is a statistically significant positive indicator—that is, all else being equal, QB’s who throw more interceptions as rookies tend to have more successful careers. I’ve been going back over this work recently with an eye towards posting something on the blog (coming soon!), and while playing around with examples I stumbled into this:

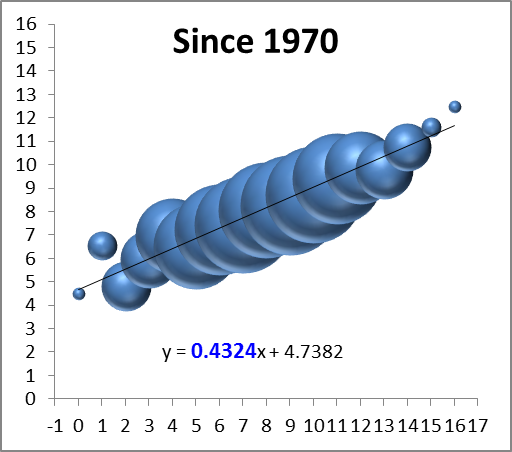

Note: Data points are QB’s in the Super Bowl era who were drafted #1 overall and started at least half of their team’s games as rookies (excluding Matthew Stafford and Sam Bradford for lack of ripeness). Peyton Manning and Jim Plunkett each had a 4.9% rookie interception rate and won one Super Bowl, so I slightly adjusted their numbers to make both visible on the chart. The R-squared value of .7287 is computed from the original, unadjusted numbers. (A linear trend actually performs slightly better, with an R-squared of .7411, but I prefer the logarithmic one aesthetically.)
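For anyone who wants to run the same linear-versus-logarithmic comparison, here is a minimal sketch in plain Python. It assumes the logarithmic trend has the form y = a + b·ln(x) and is fit by ordinary least squares on ln(x); the post doesn't say which tool drew the trend line, so treat that as my assumption. The function names and the `demo_*` numbers are made up for illustration and are not the 13 QB’s from the chart.

```python
import math


def r_squared(xs, ys):
    """R-squared of the ordinary least-squares line y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Residual and total sums of squares around the fitted line and the mean.
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - ss_res / ss_tot


def r2_linear(int_pct, rings):
    """Fit rings = a + b * int_pct."""
    return r_squared(int_pct, rings)


def r2_log(int_pct, rings):
    """Fit rings = a + b * ln(int_pct) (assumed form of the log trend)."""
    return r_squared([math.log(x) for x in int_pct], rings)


# Placeholder inputs only: NOT the real rookie INT% or Super Bowl counts.
demo_int_pct = [2.0, 3.0, 4.0, 5.0, 6.0]
demo_rings = [0, 0, 1, 1, 3]

print("linear R^2:", round(r2_linear(demo_int_pct, demo_rings), 4))
print("log R^2:   ", round(r2_log(demo_int_pct, demo_rings), 4))
```

Swapping in the real interception percentages and Super Bowl counts should let you check the two R-squared figures quoted above, provided the trend lines were fit the same way.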

Notice the relationship is almost perfectly ironic: Excluding Steve Bartkowski (5.9%), no QB with a lower interception percentage has won more Super Bowls than any QB with a higher one. Overall (including Steve B.), the seven QB’s with the highest rates have 12 Super Bowl rings, or an average of 1.7 apiece (and obv the remaining six have none). And it’s not just Super Bowls: those seven also have 36 career Pro Bowl selections between them (average of 5.1), to just seven for the remaining six (average of 1.2).

As for significance, obviously the sample is tiny, but it’s large enough that it would be an astounding statistical artifact if there were actually nothing behind it (though I should note that the symmetry of the result would be remarkable even with an adequate explanation for its “ironic” nature). I have some broader ideas about the underlying dynamics and implications at play, but I’ll wait to examine those in a more robust context. Besides, rank speculation is fun, so here are a few possible factors that spring to mind:

- Potential for selection effect: Most rookie QB’s who throw a lot of interceptions get benched. Teams may be more likely to let their QB continue playing when they have more confidence in his abilities—and presumably such confidence correlates (at least to some degree) with actually having greater abilities.

- The San Antonio gambit: Famously, David Robinson missed most of the ’96-97 NBA season with back and foot injuries, allowing the Spurs to bomb their way into getting Tim Duncan, sending the most coveted draft pick in many years to a team that, when healthy, was already somewhat of a contender (also preventing a drool-worthy Iverson/Duncan duo in Philadelphia). Similarly, if a quality QB prospect bombs out in his rookie campaign—for whatever reason, including just “running bad”—his team may get all of the structural and competitive advantages of a true bottom-feeder (such as higher draft position), despite actually having 1/3 of a quality team (i.e., a good quarterback) in place.

- Gunslingers are just better: This is my favorite possible explanation, natch. There are a lot of variations, but the most basic idea goes like this: While ultimately a good QB on a good team will end up having lower interception rates, interceptions are not necessarily bad. Much like going for it on 4th down, often the best win-maximizing choice that a QB can make is to “gamble,” that is, to risk turning the ball over when the reward is appropriate. This can be play-dependent (like deep passes with high upsides and low downsides), or situation-dependent (like when you’re way behind and need to give yourself the chance to get lucky to have a chance to win). E.g.: In defense of Brett Favre (who, in crunch time, could basically be counted on to deliver you either a win or multiple “ugly” INT’s), I’ve quipped: If a QB loses a game without throwing 4 interceptions, he probably isn’t trying hard enough. And, of course, this latter scenario should come up a lot for the crappy teams that just drafted #1 overall: I.e., when your rookie QB is going 4-12 and isn’t throwing 20 interceptions, he’s probably doing something wrong.

[Edit (9/24/2011) to add: Considering David Meyer’s comment below, I thought I should make clear that, while my interests and tastes lie with #3 above, I don’t mean to suggest that I endorse it as the most likely or most significant factor contributing to this particular phenomenon (or even the broader one regarding predictivity of rookie INT%). While I do find it meaningful and relevant that this result is consistent with and supportive of some of my wilder thoughts about interceptions, risk-taking, and quarterbacking, overall I think that macroscopic factors are more likely to be the driving force in this instance.]

For the record, here are the 13 QB’s and their relevant stats:

[table “7” not found /]